The complete dummies' guide to experimentation inside the Enterprise

The University is a unique operating environment, to say the least, but there are lessons we can learn from other enterprise organisations, as well as from educational institutions around the world, that set a gold standard for innovation and product evolution. These insights are drawn from 8 years of engagement with MIT Sloan, PwC, Gartner, Forrester and the NMC Horizon Report, along with many conversations with industry experts and startups at every level of their organisational structure.

Analysis takes more work than experimentation:

When exploring innovation, it's important to emphasise learning from experiments, and from iterating on them, over predictive analytics. You can easily build 4 or 5 mock-ups or mini products with the time and money it would take to set a business analyst loose in the search for requirements. Even more challenging is the limited understanding of requirements analysis in companies that are not statistics shops. There is a caveat: where you don't know which questions to ask, you can treat the entire predictive and prescriptive analysis approach as the experiment. This is what we did when we delivered the CPC-data framework.

The (sort of) myth of where good ideas come from:

While I am a firm believer in the coffee house hypothesis, good ideas are only half the story.

As enterprises like the University become more and more data-driven, we must take a leaf from our academic colleagues and make hypotheses the centre of our experimentation. This isn't about proofs of concept; it's about minimum viable products that prove or disprove a hypothesis. For example, as we work towards an exhibition app for a current request, we've already discarded 3 or 4 approaches by testing their hypotheses, and we are implementing 2 or 3 others at this very moment. One or more of them will stick and be proven true, and we will fold it into the project's delivery pipeline, making the outcome incrementally better for very little work.

The limits aren't the problem:

The goal is to learn as much as possible within specific constraints (e.g., time, money, customer segment, technical implementation), and those limits and constraints are there to be challenged. In long-standing enterprise environments, the original reason for a constraint is often lost to the mists of time, so healthy challenge of these constraints is part of the innovation pipeline.

From the 2016 MIT Sloan report:

"This highlights a painful insight: The biggest challenges are not technical or financial, but cultural and organizational. At most firms, management overwhelmingly favors planning, programs, projects, and pilots over the real-world benefits of experimental knowledge and insight. Most don’t realize how exponential economics of experimentation can bolster their innovation investment portfolios."

"Executives frequently resist easy opportunities to cost-effectively experiment because they fear challenges to their hard-won professional intuitions and authority. Data-driven digital experiments might undermine pet hypotheses or business perspectives. But preserving, protecting, and defending the status quo may prove even more costly."

Make experiments social:

ICT don't have to own every experiment. Engaging diverse portfolios in the process, whether through Scrum, product pipeline engagement or even informal chats and dialogues around innovation that invite comments and critiques, is a path to a more holistic understanding of what needs to change. Broadly socialising results with vendors, the community, students and others provides both much-needed transparency and a feedback loop that adds value rather than criticism.

Prioritisation is hard:

I don't think I can overstate this. Whatever mechanism you use for prioritisation, it must align with organisational strategy. For the University there are 3 pillars (Research, Education and Culture), and the sub-strategies under them give us broad brushes with which to paint our experiments. The real challenge is not prioritising by strategic alignment; it is balancing the tensions that typically emerge between customer-facing managers and technical managers over what to test first. Our Lab is unique in that we are both technical and customer-facing, and we have been completely transparent about our experiment choices. You can see exactly the types of initiatives we engage in here, as can every stakeholder.

Perfection is the enemy of Done:

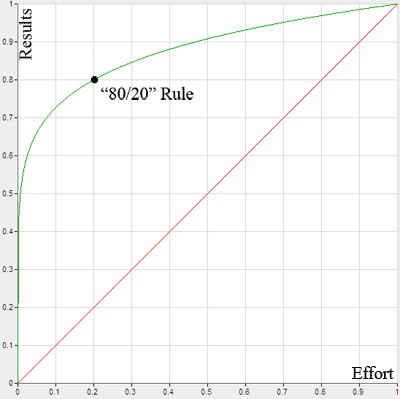

The Pareto Principle, or 80/20 rule, holds that roughly 80% of outcomes come from 20% of the effort; applied here, it reminds us that we can't solve 100% of problems for 100% of users. In a product-driven world, perfectionist engineering gives way to quicker, iterative sensibilities. You solve for 80% of people first. Outliers can be addressed with time and engagement, but if 80% of your challenge can be solved now, why aren't you doing it?

Focus on core outcomes rather than trying to solve everything for everyone. If you manage expectations, people will understand. The University has a bias towards "everyone's voice must be heard and acted on", which is at odds with the organisation's capability and funding to deliver that level of outcome. Pivoting to "everyone's voice must be heard" is a better strategy for delivering innovation, and even for waterfall project engagement.

Measure trajectory not just outcomes:

Look, outcomes are important, but a shift in organisational focus brought about by experiments, even experiments that produce no outcome, is almost as important. Helping to align the organisation with innovative and agile practices is as much an outcome as any tool or product delivered from an innovation portfolio.

Be Ethical:

'Don't Be Evil' and 'Do The Right Thing' are the mottos of Google and its parent company Alphabet respectively, and form part of their codes of conduct. Set boundaries, and understand what your data can mean for the people it describes. Engage with cybersecurity specialists in design, and with privacy experts in execution.